AI Risk Management: Approaches and Benefits

- Jack Sullivan

- Oct 3, 2023


Updated: Apr 11, 2024

In the ever-evolving landscape of healthcare technology, where precision and reliability are paramount, we are committed to staying current and employing the latest guidelines and principles of risk management.

In this article, my aim is to demonstrate how we employ industry-standard frameworks to address and mitigate AI risks in our development lifecycle and in the operation of Biologit MLM-AI.

As inspiration for our approach, let us consider current guidance for the development of AI systems, which states:

“FDA will assess the culture of quality and organizational excellence of a particular company and have reasonable assurance of the high quality of their software development, testing, and performance monitoring of their products.” [FDA Good Machine Learning Practice]

To that end, our approach to the risk management of AI systems follows these tenets:

Risk management is integral to (and integrated into) our company-wide culture of quality

It is a continuous effort led by the people who are most involved in the delivery and operation of AI systems

Adherence to high standards and continuous monitoring of emerging guidance and best practices

We have adopted the ISPE GAMP (Good Automated Manufacturing Practice) methodology throughout our entire development lifecycle, including AI development.

GAMP is a well-known framework in the life sciences industry for ensuring the high-quality delivery of software systems. Leading industry bodies have proposed extensions of GAMP for AI; we use the work of TransCelerate BioPharma on AI validation as our reference.

With these resources we can execute a structured and systematic approach to identifying, assessing, and addressing risks throughout the AI development lifecycle by promoting the following flow:

Risk Identification

Understand the context, consult experts, and pinpoint potential risks like data quality, bias, privacy, and compliance.

We have established a cross-functional team of experts in quality management, data science and pharmacovigilance who meet regularly to discuss:

Ongoing risk mitigation efforts

Identification of new risks with respect to our usage of AI

Upcoming regulatory changes on the ethical usage of AI

Review of published and emerging risk frameworks, for example: the NIST AI Risk Management Framework

Quantitative evaluation of results gathered by continuous model monitoring to detect early signs of model drift or bias
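As an illustration of the last item, the sketch below computes a Population Stability Index (PSI) between a baseline sample of model scores and a recent one. PSI is one common drift statistic; the article does not say which metric Biologit uses, so the function name, the ten-bin layout and the assumption that scores lie in [0, 1] are all hypothetical.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between two samples of model scores assumed to lie in [0, 1]."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")

    def bin_fractions(scores: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for s in scores:
            # Clamp so that a score of exactly 1.0 falls in the last bin.
            idx = min(max(int(s * bins), 0), bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from causing log(0) or division by zero.
        return [max(c / len(scores), eps) for c in counts]

    expected = bin_fractions(baseline)
    actual = bin_fractions(current)
    # Every term is non-negative, so PSI is 0 only when the binned
    # distributions match.
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))
```

A review meeting could then look at how this value moves between monitoring windows. Any alert threshold would need to be agreed with subject matter experts rather than taken from this sketch.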

Risk Assessment

Evaluate the likelihood and severity of each risk, considering its impact on users, platform operations and any regulatory implications.

Biologit assesses the impact of each risk using a combination of severity and likelihood, where both are determined through dialogue with subject matter experts and quantitative data gathered from monitoring model results.

The output of risk assessment translates into action items prioritized during iteration planning. High-impact risks proceed straight to treatment.
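The combination of severity and likelihood can be sketched as a simple scoring matrix. This is a minimal illustration, not Biologit's actual scheme: the three-level scale, the multiplication rule, the `high_impact_threshold` value and all names are assumptions.

```python
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class Risk:
    name: str
    severity: Level
    likelihood: Level

    @property
    def impact(self) -> int:
        # Assumed rule: impact is the product of severity and likelihood.
        return int(self.severity) * int(self.likelihood)


def prioritize(risks, high_impact_threshold=6):
    """Split risks into (treat immediately, plan in a later iteration)."""
    ordered = sorted(risks, key=lambda r: r.impact, reverse=True)
    treat_now = [r for r in ordered if r.impact >= high_impact_threshold]
    backlog = [r for r in ordered if r.impact < high_impact_threshold]
    return treat_now, backlog


if __name__ == "__main__":
    # Hypothetical entries, for illustration only.
    risks = [
        Risk("bias against under-represented populations", Level.HIGH, Level.MEDIUM),
        Risk("ambiguous intended-use wording", Level.MEDIUM, Level.LOW),
    ]
    treat_now, backlog = prioritize(risks)
    print([r.name for r in treat_now], [r.name for r in backlog])
```

Keeping the score as a plain product makes the ranking easy to explain in a review meeting. A real scheme might weight regulatory implications separately.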

Risk Treatment

In this step, we develop strategies to introduce controls that remove or mitigate identified risks.

Risk treatment is managed holistically as most risks require a multi-faceted mitigation that can be spread across solutions such as:

Platform enhancements that clarify how the product interacts with AI models

Improvements to monitoring, validation or change management procedures

Updates to intended use and operating window documentation

Data labeling, model training and model parameter tuning

This approach allows us to craft AI systems that are not only advanced in functionality but are also aligned with the regulatory landscape and ethical considerations of the healthcare industry. Below are examples of risk controls implemented in Biologit MLM-AI:

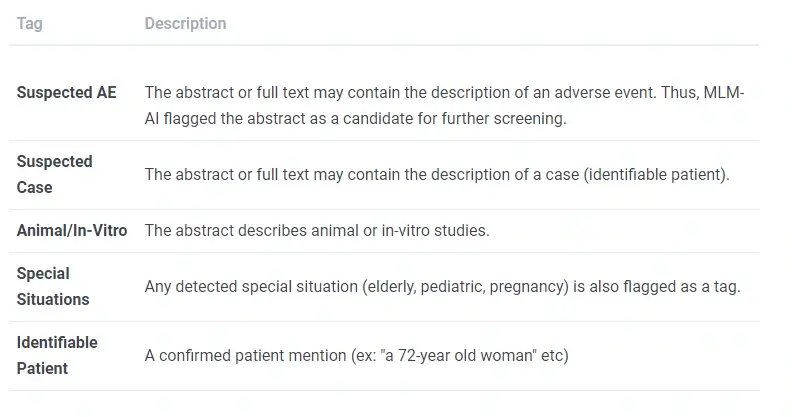

Sample risks and controls for the design of biologit MLM-AI (full table here)

Example: Addressing Intended Use Risks with Extensive Documentation

A common theme in the literature on good practice for AI in healthcare is ensuring that AI adheres to its intended use. For example, the FDA GMLP (Good Machine Learning Practice) guidance states:

“Users are provided ready access to clear, contextually relevant information that is appropriate for the intended audience (such as health care providers or patients) including: the product’s intended use and indications for use[...]”

To address intended use risks, we use clear, publicly available documentation as a risk control. Biologit MLM-AI documentation comprises:

For users: product guides, online articles and talks

For technical teams and auditors:

Model fact sheets summarizing model specification and intended use

A technical paper describing our approach with experimental results

Section from the Biologit model factsheet discussing intended use of the suspected adverse event model

Verification and Testing

Verify risk mitigation through rigorous testing across scenarios and ensure ethical adherence.

Many quality gates exist in our AI lifecycle to ensure that risks are mitigated and monitored:

Qualitative assessment of predictions performed by a domain expert, based on real-world sampled data

Bias assessments - in particular bias in relation to under-represented populations and how this could affect the quality of predictions

Statistical assessment - model prediction statistics are reviewed by domain experts together with the data science team for specific real-world search data

Change Management - extensive testing to ensure model updates retain expected performance

Validation - functional testing of all functionality dependent on AI. AI testing is fully integrated into our computer systems validation procedures

This list is extended or adjusted as new risks emerge.
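Two of the gates above lend themselves to small automated checks. The sketch below shows a per-group recall calculation for the bias assessment and a regression gate for change management. The function names, the record layout, the choice of recall as the metric and the tolerance value are all illustrative assumptions, not a description of Biologit's procedures.

```python
from collections import defaultdict


def recall_by_group(records):
    """Recall per subgroup.

    records: iterable of (group, y_true, y_pred) with boolean labels.
    Groups with no positive examples are omitted from the result.
    """
    true_positives = defaultdict(int)
    positives = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true:
            positives[group] += 1
            if y_pred:
                true_positives[group] += 1
    return {g: true_positives[g] / positives[g] for g in positives}


def regression_gate(baseline, candidate, tolerance=0.01):
    """Return the metrics where the candidate model is missing a value
    or falls more than `tolerance` below the baseline model."""
    failures = []
    for metric, base_value in baseline.items():
        new_value = candidate.get(metric)
        if new_value is None or new_value < base_value - tolerance:
            failures.append(metric)
    return failures
```

A model update would pass the gate only when `regression_gate` returns an empty list. A large gap between groups in `recall_by_group` would be raised with the domain experts for qualitative review.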

Documentation and Reporting

All current and historical risks are tracked and documented fully in our risk registers, including a thorough description of the risk, its impact, assessment justifications and a log of ongoing discussions on treatment progress.
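A register entry with the fields listed above could be modelled as follows. This is a minimal sketch under assumed names (`RiskRecord`, `RiskRegister`, the `open`/`closed` statuses). The actual register format is not described in this article.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class RiskRecord:
    risk_id: str
    description: str
    impact: str
    justification: str
    status: str = "open"
    # Append-only log of treatment discussions as (date, note) pairs.
    log: list[tuple[date, str]] = field(default_factory=list)

    def add_note(self, when: date, note: str) -> None:
        self.log.append((when, note))

    def close(self, when: date, note: str) -> None:
        # Closed records are kept, so historical risks remain auditable.
        self.add_note(when, note)
        self.status = "closed"


class RiskRegister:
    def __init__(self) -> None:
        self._records: dict[str, RiskRecord] = {}

    def add(self, record: RiskRecord) -> None:
        if record.risk_id in self._records:
            raise ValueError(f"duplicate risk id: {record.risk_id}")
        self._records[record.risk_id] = record

    def current(self) -> list[RiskRecord]:
        return [r for r in self._records.values() if r.status == "open"]

    def historical(self) -> list[RiskRecord]:
        return [r for r in self._records.values() if r.status == "closed"]
```

Records are never deleted in this sketch; closing a risk only changes its status, which keeps the full discussion log available for periodic review.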

Periodic Review and Auditing

Oversight is also integral to a culture of quality: risks are periodically reviewed and the process is audited to ensure ongoing compliance with our standards. The risk management process is integrated into our quality management system, ensuring management oversight and the periodic auditing of activities.

Conclusions

Risk management is one of the most valuable quality processes to ensure the continuous improvement of our platform, processes and documentation. In this article we discussed approaches with examples of how we manage risk throughout the development lifecycle.

About biologit MLM-AI

biologit MLM-AI is a complete literature monitoring solution built for pharmacovigilance teams. Its flexible workflow, unrivalled scientific database, and unique AI productivity features deliver fast, inexpensive, and fully traceable results for any screening needs.

Contact us for more information on the platform and to sign up for a free trial.
